Forward Scaling for Multicore Performance

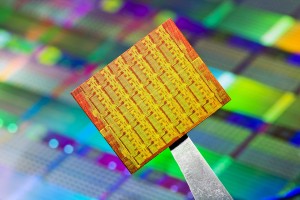

The era of sequential, single-threaded software development deployed to a uniprocessor machine is rapidly fading into history. Nearly all computers sold today have at least two, if not four cores – and will have eight in the near future. Intel announced last month the successful production and testing of a new 48-core research processor which will be made available to industry and academia for research and development of manycore parallel software developer tools and languages.

In the near future, users of high performance software in finance, bioinformatics, or GIS will expect their applications to scale with core count, and software that fails to do so will need to be either rewritten or abandoned. To future-proof performance-sensitive software, code written today needs to be multicore aware and scale automatically to all available cores – this is the key idea behind forward scaling software. If Moore’s ‘law’ is to be sustained into the future, hardware scalability must be joined with a similar shift in software. This fundamental change in computing and application development, termed the ‘Manycore Shift’ by Microsoft, is an evolutionary step that software developers must appreciate and adapt to in order to create long-lived, scalable applications.

CenterSpace’s Forward Scaling Strategy

This project of creating forward scaling software can sound daunting, but for many application developers it can be reduced to choosing the right languages & libraries for the computationally demanding portions of their application. If an application’s performance-sensitive components are forward scaling, so is the application. At CenterSpace we are very performance sensitive and are working to ensure that our users benefit from forward scaling behavior. Linear scaling with core count cannot always be achieved, but in the numerical computational domain we can frequently come close to this ideal.
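One common way to reason about why linear scaling is not always reachable is Amdahl’s law. This is offered as a rough model, not something specific to our libraries; the symbols $p$ (the fraction of run time that can be parallelized) and $N$ (the core count) are introduced here only for illustration.

```latex
S(N) = \frac{1}{(1 - p) + \dfrac{p}{N}}
\qquad\text{e.g. } p = 0.95:\quad
S(8) = \frac{1}{0.05 + 0.95/8} \approx 5.9,
\qquad
S(48) = \frac{1}{0.05 + 0.95/48} \approx 14.3
```

Under this model, even a small sequential fraction caps the achievable speedup, which is why numerical kernels with very little sequential work are the ones that come closest to the linear ideal.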

Here are some parallel computing technologies that we are looking at adopting to ensure we meet this goal.

Microsoft’s Task Parallel Library

Microsoft Research released the Task Parallel Library about two years ago, and improved upon it most recently in June of 2008. With this experience, Microsoft will now include task-based parallelism functionality in the March 2010 release of .NET 4.

These parallel extensions will reside in a new static class Parallel in the System.Threading.Tasks namespace. While this framework provides an extensible set of classes for complex task parallelism problems, the common use cases will include the typical variants of the lowly loop. Here’s a very simple example of computing the element-wise square of a vector, contrasting sequential and parallel code patterns.

```csharp
// Single-threaded looping
double[] s = new double[n];
for (int i = 0; i < n; i++)
{
    s[i] = v[i] * v[i];
}
```

```csharp
// Using the Parallel class & anonymous delegates
double[] s = new double[n];
Parallel.For(0, n, delegate (int i)
{
    s[i] = v[i] * v[i];
});
```

```csharp
// Using the Parallel class & lambda expressions
double[] s = new double[n];
Parallel.For(0, n, i => s[i] = v[i] * v[i]);
```

Note how concisely the parallel looping code can be written with lambda expressions. If you haven’t yet gotten excited about lambda expressions, hopefully you are now! Also, outer variables can be referenced from inside the lambda expression (or the anonymous delegate), making code ports fairly simple once the inherent parallelism is recognized.
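For readers who want to try this, here is a minimal, self-contained sketch that wraps the sequential and parallel loops above in two methods. The class and method names (SquareDemo, SquareSequential, SquareParallel) are made up for illustration; only Parallel.For comes from the framework.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical helper class for illustration; not part of NMath or .NET.
public static class SquareDemo
{
    // Sequential element-wise square.
    public static double[] SquareSequential(double[] v)
    {
        var s = new double[v.Length];
        for (int i = 0; i < v.Length; i++)
        {
            s[i] = v[i] * v[i];
        }
        return s;
    }

    // Parallel element-wise square. The lambda captures the outer
    // variables s and v, and each iteration writes only to its own s[i],
    // so the iterations are independent and need no locking.
    public static double[] SquareParallel(double[] v)
    {
        var s = new double[v.Length];
        Parallel.For(0, v.Length, i => s[i] = v[i] * v[i]);
        return s;
    }
}
```

Calling SquareDemo.SquareParallel(v) should return the same array as SquareDemo.SquareSequential(v); only the order in which the elements are computed differs.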

Intel’s Ct Data Parallel VM

CenterSpace already leverages Intel’s forward scaling implementations of BLAS and LAPACK in our NMath and NMath Stats libraries, so we are very attuned to Intel’s efforts to bring programmers new tools for leveraging their multicore chips. Intel has a long history of supporting developers in creating and debugging multithreaded applications by offering solid libraries, compilers, and debuggers. Intel has a major initiative called the Tera-Scale Computing Research Program to push forward all areas of high performance computing, spanning from hardware to software.

At CenterSpace we are particularly interested in a new

This is a fundamentally different approach from Microsoft’s task-based parallel language classes. In the example above, note that the computation in the lambda expression must be independent for every i. This places a burden on the programmer to identify and create these parallel lambda expressions; the data parallel approach frees the programmer of this significant burden. Computing the dot product in C#, leveraging the C front end to the data parallel VM, might look something like:

```csharp
// Using a Ct-based parallel class (hypothetical API)
CtVector v1 = new CtVector(1,2, ... ,49999,50000);
CtVector v2 = new CtVector(50000,49999, ... ,2,1);
long dot_product = CtParallel.AddReduce(v1*v2);  // long: the sum exceeds the int range
```

Note that the dot product operation is not easily converted to a task-based parallel implementation, since the operations in the lambda expression are not independent: the computation requires both a multiplication and a summation (reduction). However, there is significant data parallelism in the vector product and reduction steps, which should exhibit near-linear scaling with processor count using this Ct-based implementation.
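To make that burden concrete, here is a rough sketch of what a task-based dot product can look like using the Parallel.For overload that carries thread-local state. This is one possible approach, not the Ct implementation; the class and method names (DotProductDemo, DotParallel) are hypothetical, while Parallel.For and Interlocked.Add are real framework calls.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper class for illustration; not part of NMath or Ct.
public static class DotProductDemo
{
    public static long DotParallel(int[] a, int[] b)
    {
        if (a.Length != b.Length)
        {
            throw new ArgumentException("Vectors must have the same length.");
        }

        long total = 0;
        Parallel.For<long>(
            0, a.Length,
            // Each worker starts with its own partial sum of zero.
            () => 0L,
            // The multiplications are independent; each worker accumulates
            // into its private subtotal, so no locking is needed here.
            (i, loopState, subtotal) => subtotal + (long)a[i] * b[i],
            // The reduction step: each worker's subtotal is merged into the
            // shared total exactly once, using an atomic add.
            subtotal => Interlocked.Add(ref total, subtotal));
        return total;
    }
}
```

The programmer has to split the work into an independent part (the multiplications) and a carefully synchronized part (the final merge), which is exactly the bookkeeping a data parallel engine aims to hide. For the two 50,000-element vectors in the example above, the result is 20,834,583,350,000, which is why a 64-bit accumulator is used.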

As with task-based parallelism, the data parallel approach has its drawbacks. Most notably, the data must reside not in native types but in special Ct containers (CtVector in this example) that the data parallel engine knows how to divide and reassemble. This is generally a minor issue; however, if your data does not naturally reside in vectors or matrices, data parallelism may not be an option for leveraging multicore hardware, and task-based parallelism may be the answer. Both approaches have their strengths and weaknesses, and application domains where each shines. At CenterSpace, since our focus is on numerical computation, our data typically resides comfortably in vectors, so we expect Ct’s data parallel approach to be an important tool in our future.

Cloud Computing with EC2 & Azure

Cloud computing, typically thought of as a room full of servers and disk drives, is not conceptually that different from a single computer with many cores. In fact, Intel likes to refer to their new 48-core processor as a single-chip cloud computer. In both cases the central goals are performance and scalability.

Since many of CenterSpace’s customers have high computational demands and often process large datasets, we have started pushing some NMath functionality out into the cloud. A powerful & computationally demanding data clustering algorithm called Non-negative Matrix Factorization (NMF) was our first NMath port to the cloud. With this algorithm now residing in the cloud, customers can access this high-performance NMF implementation from virtually any programming language, cutting their run times from days to hours. We’ll be blogging more on our cloud computing efforts in the near future.

Happy Computing,

-Paul

References & Additional Resources

- Toub, Stephen. Patterns of Parallel Programming. Whitepaper, Microsoft Corporation, 2009.

- The Manycore Shift: Microsoft Parallel Computing Initiative Ushers Computing into the Next Era, 2007.

- An in-depth technical article on Intel’s numeric intensive, multi-core ready, technologies, November 2007.